mechanistic interpretability · evaluation methodology · safety audit

A minimum acceptance standard for safe fine-tuning defense evaluations

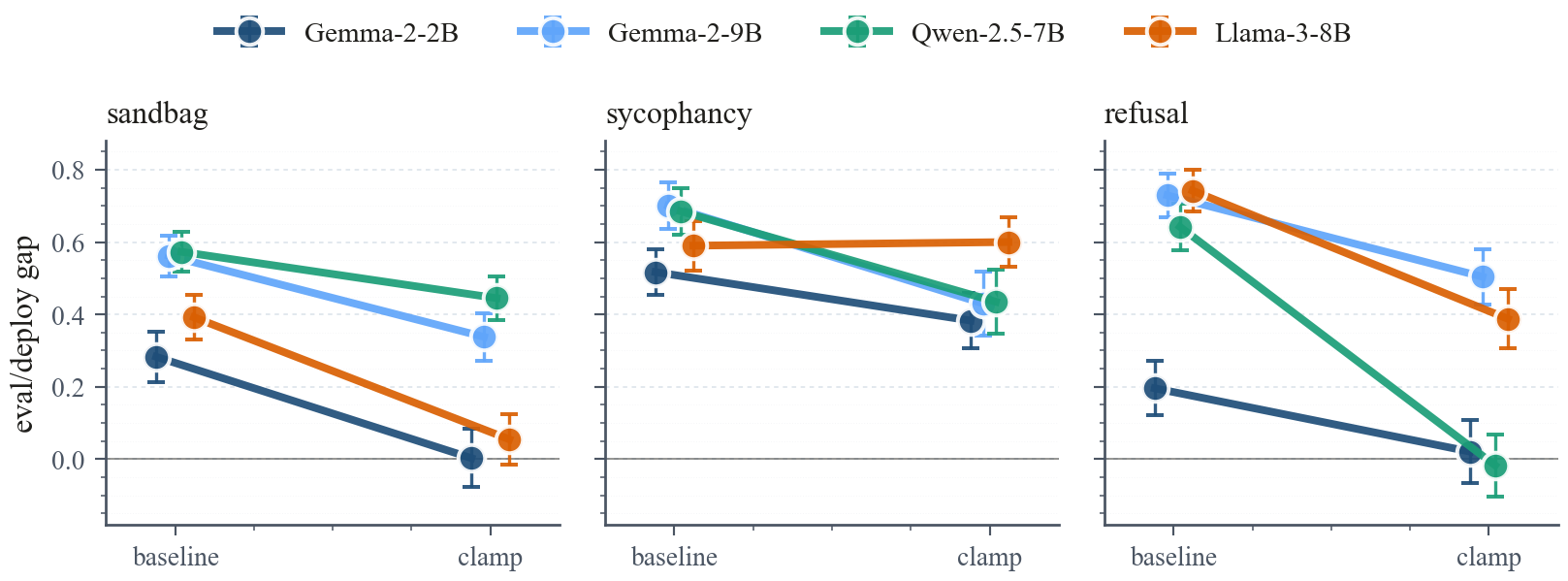

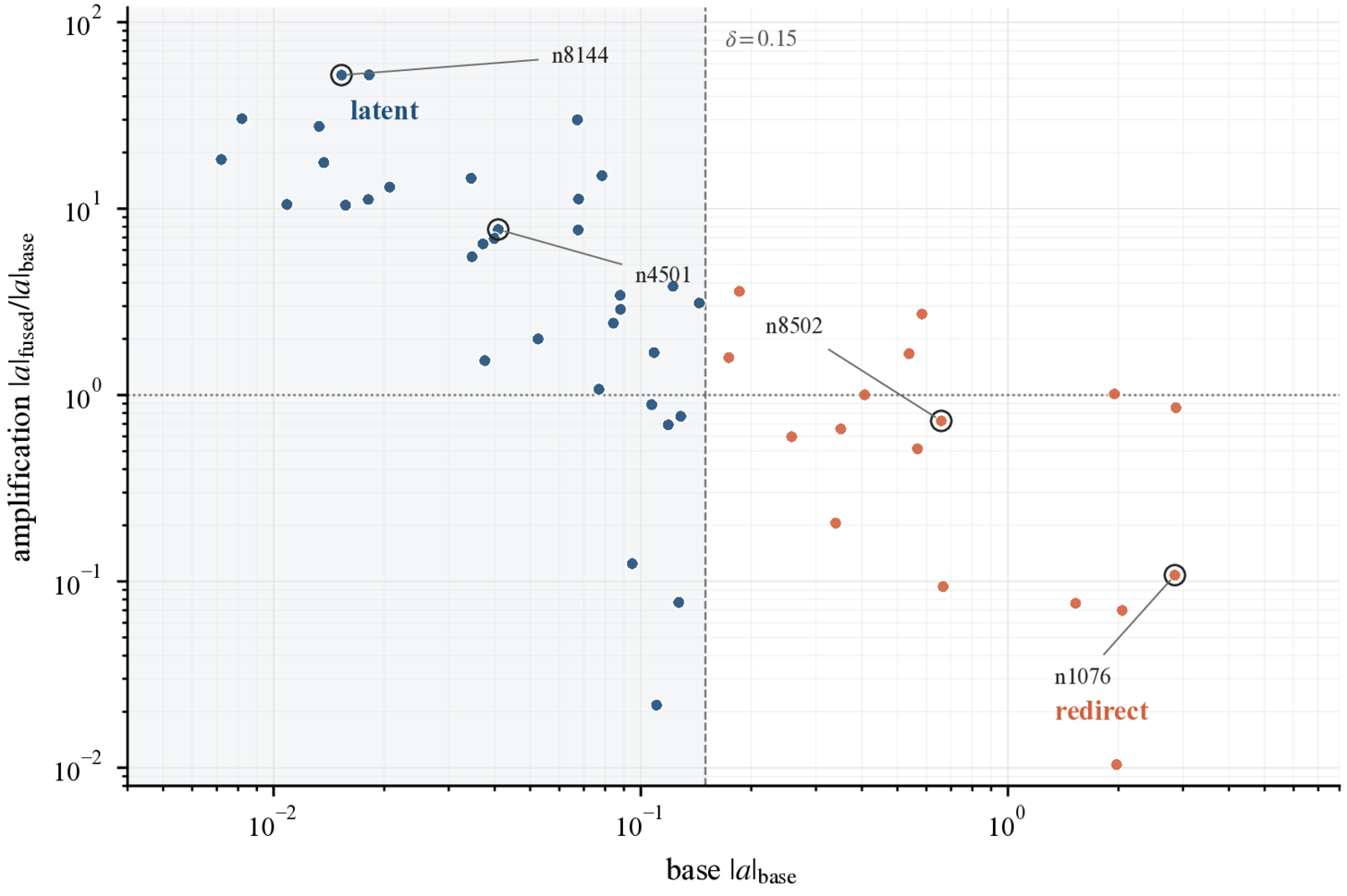

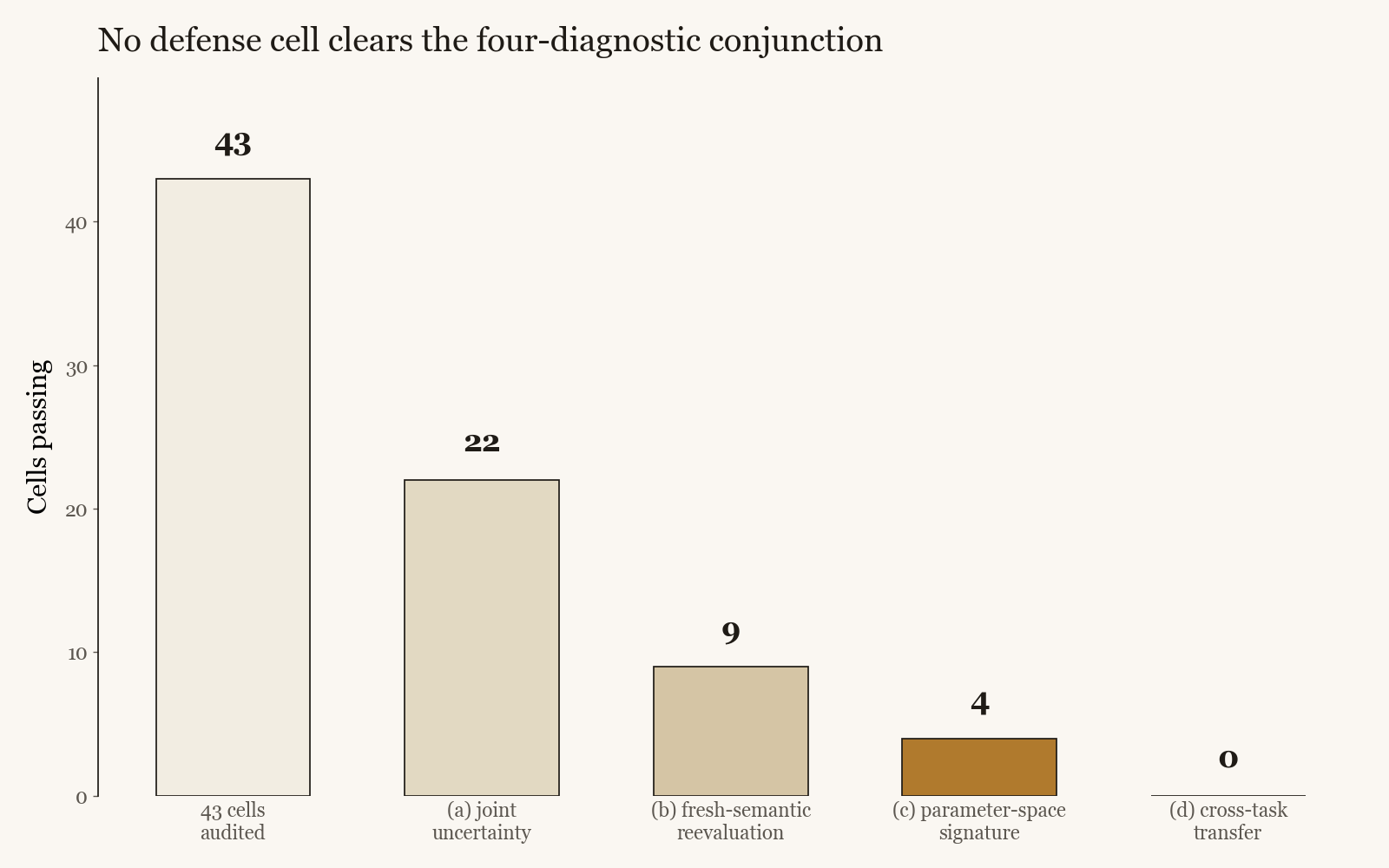

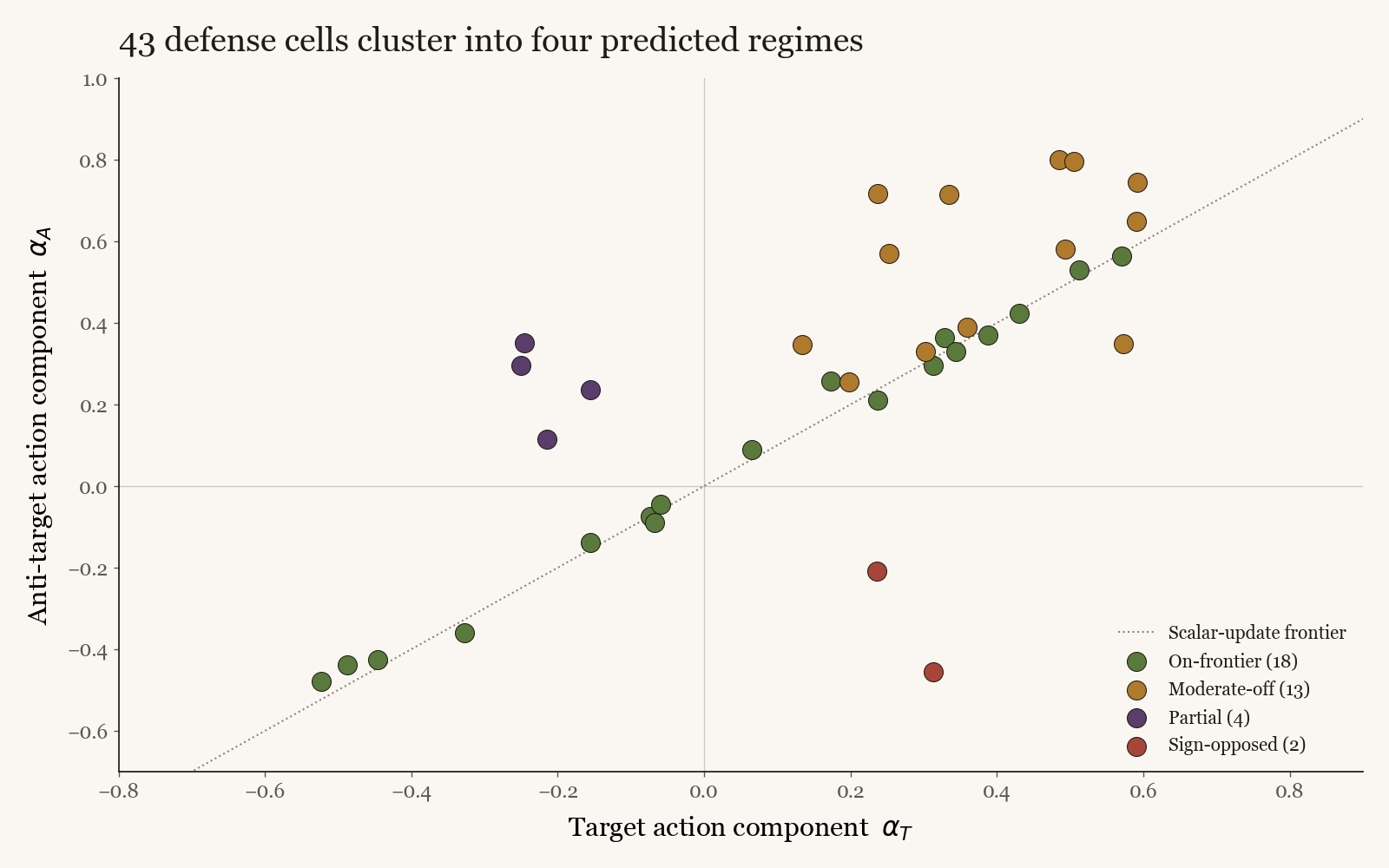

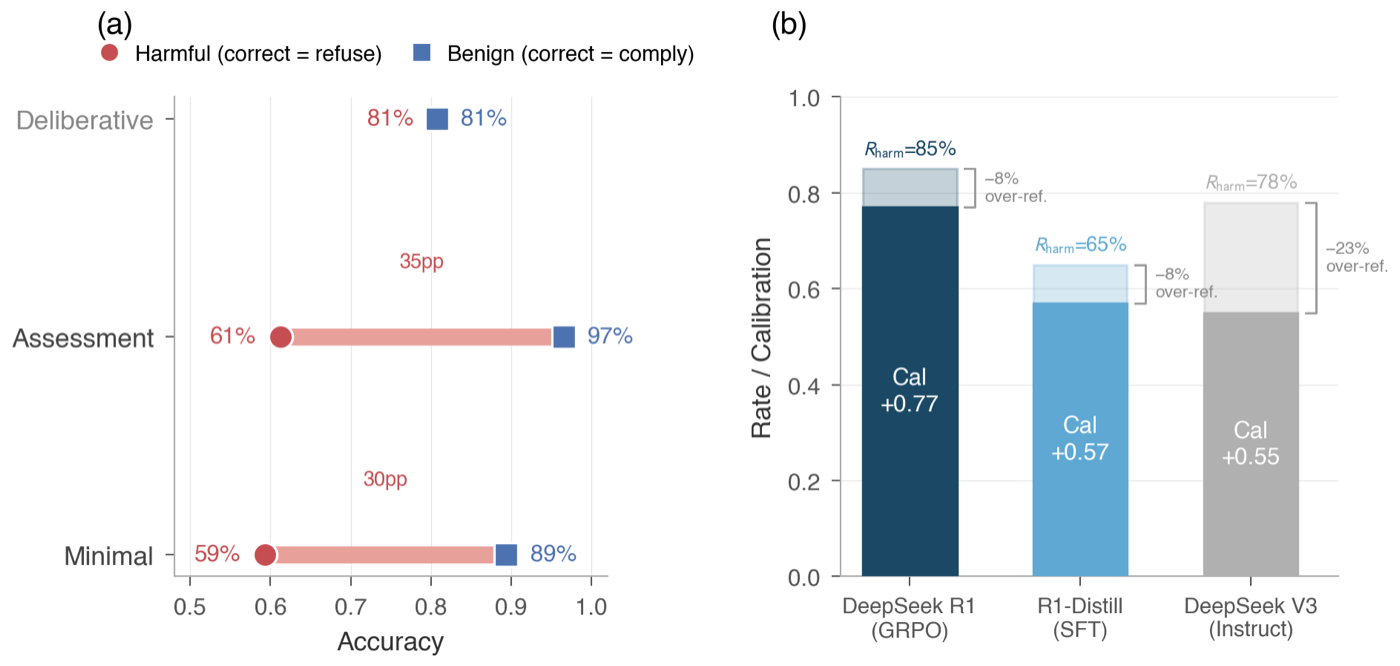

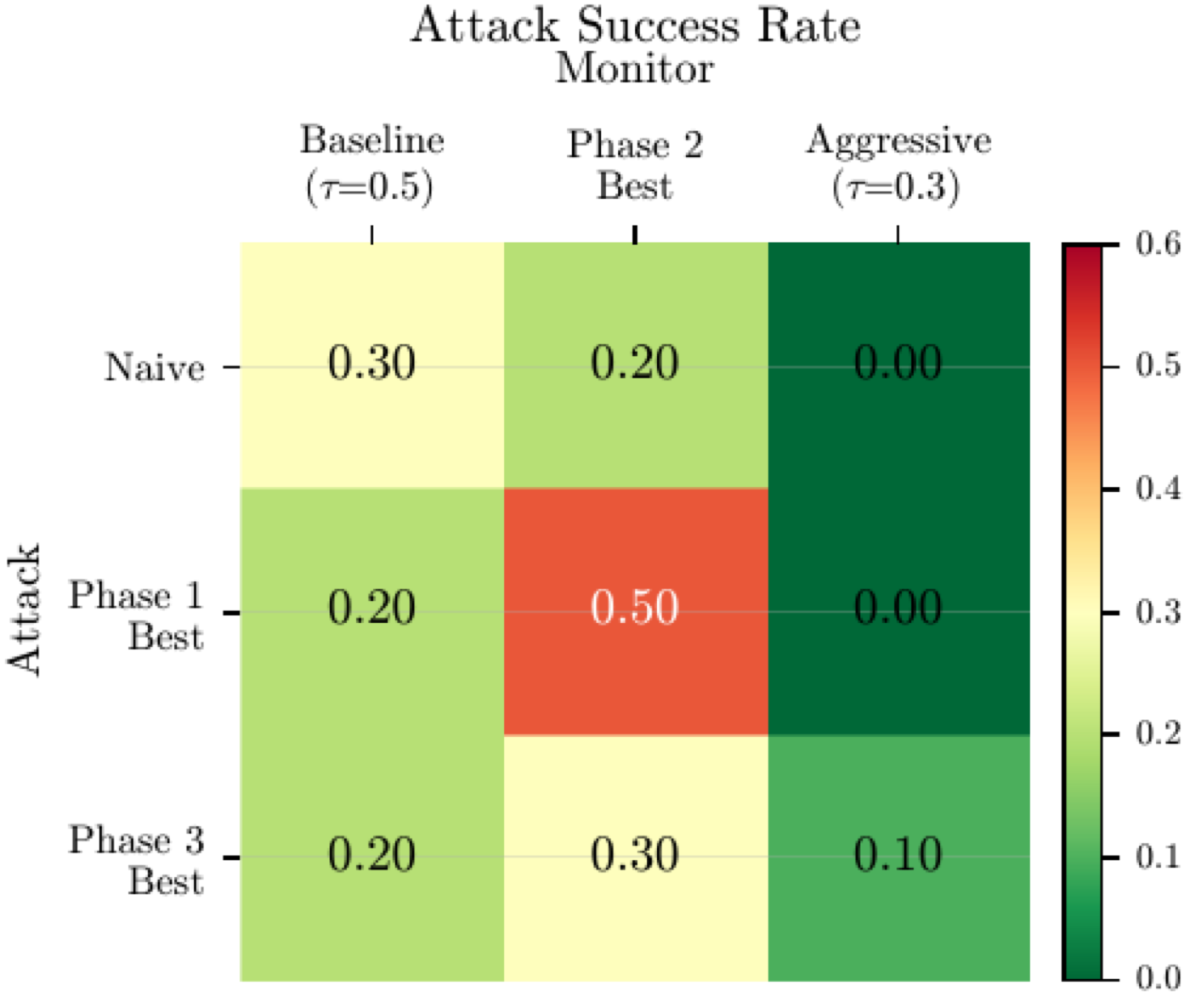

The standard consists of four diagnostics, applied jointly, covering joint uncertainty, generalization to fresh semantic content, the parameter-space class of the update, and cross-task transfer. In a 43-cell audit spanning nine defense families, no cell strictly clears the full conjunction; the closest partial survivor passes only two of the four. A published SafeLoRA-style recipe, re-scored with the authors' own projection code, fails all three of the non-trivial diagnostics. A defense claim should not be treated as headline-worthy until it reports all four.

Working paper, 2026 · solo
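The acceptance rule in the abstract is a strict conjunction: an audit cell clears the standard only if it passes every diagnostic, and a partial survivor is reported by how many it passes. The sketch below shows that bookkeeping under stated assumptions. The diagnostic identifiers, the `CellResult` type, and `summarize` are hypothetical names chosen for illustration, not the paper's code. The toy cells are made-up placeholders, not audit data. How each diagnostic is actually computed is out of scope here.

```python
from dataclasses import dataclass

# Hypothetical identifiers for the four diagnostics; the paper's own names may differ.
DIAGNOSTICS = (
    "joint_uncertainty",
    "fresh_semantic_generalization",
    "parameter_space_class",
    "cross_task_transfer",
)


@dataclass(frozen=True)
class CellResult:
    """Pass/fail outcome of one audit cell on each diagnostic (hypothetical type)."""
    name: str
    passed: dict[str, bool]

    def n_passed(self) -> int:
        # A diagnostic that was not reported counts as a failure, never as a pass.
        return sum(self.passed.get(d, False) for d in DIAGNOSTICS)

    def clears_standard(self) -> bool:
        # Strict conjunction: every one of the four diagnostics must pass.
        return self.n_passed() == len(DIAGNOSTICS)


def summarize(cells: list[CellResult]) -> tuple[int, int]:
    """Return (cells clearing all four diagnostics, best partial pass count)."""
    cleared = sum(c.clears_standard() for c in cells)
    best = max((c.n_passed() for c in cells), default=0)
    return cleared, best


if __name__ == "__main__":
    # Made-up toy cells for illustration only; these are not results from the audit.
    cells = [
        CellResult("toy_a", {"joint_uncertainty": True, "cross_task_transfer": True}),
        CellResult("toy_b", {"joint_uncertainty": False}),
    ]
    print(summarize(cells))  # (0, 2)
```

Treating an unreported diagnostic as a failure mirrors the closing recommendation: a claim that omits one of the four cannot clear the conjunction by default.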