Scaling Within an Epistemic Horizon: Observational Equivalence and Creator Hypotheses

Headline result

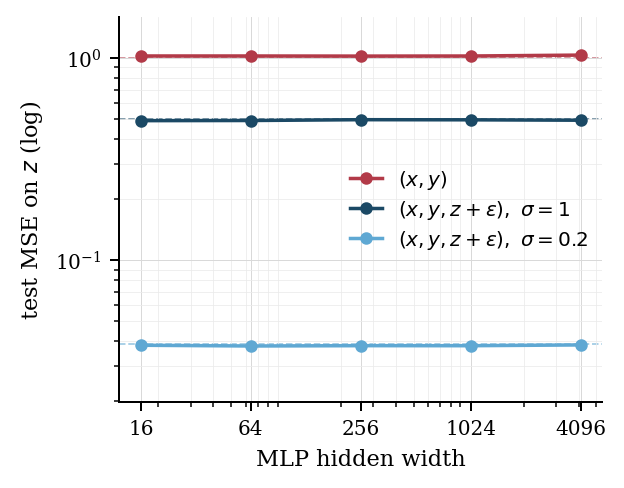

Empirical scaling laws describe how loss declines within a fixed epistemic horizon. They cannot, in principle, decide between worlds that are observationally equivalent under that horizon. Scaling sharpens estimation; it does not enlarge the horizon. Creator hypotheses, hidden-geometry recovery, and consciousness questions all share this structure.
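Here is a toy illustration of the headline claim. It rests on stated assumptions: three hypothetical worlds A, B, and C each induce a Bernoulli law on a single binary evidence channel, and A and B are deliberately given the same law. The names `WORLDS`, `posterior`, and `sample` are illustrative and do not come from the paper. As data grow, the posterior weight on C should fall toward zero while the A:B ratio stays at its prior value.

```python
import math
import random

# Hypothetical toy worlds: each induces a Bernoulli law on one binary evidence
# channel (the value is P(evidence = 1)). A and B are distinct worlds that
# induce the same law, so they lie in the same epistemic horizon.
WORLDS = {"A": 0.7, "B": 0.7, "C": 0.4}


def posterior(observations, worlds=WORLDS):
    """Posterior over worlds under a uniform prior, given 0/1 observations."""
    ones = sum(observations)
    zeros = len(observations) - ones
    log_lik = {
        name: ones * math.log(p) + zeros * math.log(1.0 - p)
        for name, p in worlds.items()
    }
    # Normalize in log space so large n does not underflow.
    top = max(log_lik.values())
    weights = {name: math.exp(ll - top) for name, ll in log_lik.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def sample(p, n, rng):
    """Draw n Bernoulli(p) observations."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


rng = random.Random(0)
for n in (10, 100, 1000):
    # Data are generated by world A; B is observationally equivalent to A.
    post = posterior(sample(WORLDS["A"], n, rng))
    print(n, {name: round(v, 4) for name, v in post.items()})
```

Under the uniform prior, A and B receive identical likelihoods at every sample size, so their posterior ratio stays exactly 1:1 while C is ruled out. A non-uniform prior would shift that ratio, but only by assumption, never by data.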

Method in brief

Treat the learner as the family of probability laws its accessible channel induces on evidence, indexed by experimental policy. Reduce empirical identifiability to factorization through that family. Define an epistemic horizon as the equivalence class of worlds that agree on every such law. Show that scale within a fixed horizon improves estimation but does not collapse the equivalence class. Apply the framework to text-only language models, hidden-geometry recovery, and creator hypotheses.
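One way to write these definitions compactly uses notation introduced here, which may differ from the paper's. W denotes the set of candidate worlds, Π the set of accessible experimental policies, and P_w^π (with density p_w^π) the law that policy π induces on evidence in world w. The first line defines the policy-indexed family and the horizon. The second states the factorization condition. The third shows why scale cannot separate worlds inside one horizon.

```latex
\[
\Phi(w) = \bigl(P_w^{\pi}\bigr)_{\pi \in \Pi},
\qquad
[w]_{\Pi} = \{\, w' \in \mathcal{W} : P_{w'}^{\pi} = P_w^{\pi} \ \text{for all } \pi \in \Pi \,\}
          = \Phi^{-1}\bigl(\Phi(w)\bigr)
\]
\[
f : \mathcal{W} \to \mathcal{Y} \ \text{identifiable}
\;\Longrightarrow\;
f = g \circ \Phi \ \text{for some } g
\;\Longleftrightarrow\;
f(w') = f(w) \ \text{whenever } w' \in [w]_{\Pi}
\]
\[
w' \in [w]_{\Pi}
\;\Longrightarrow\;
\prod_{i=1}^{n} \frac{p_{w'}^{\pi_i}(e_i)}{p_{w}^{\pi_i}(e_i)} = 1
\ \text{almost surely, for every } n \text{ and every policy sequence } \pi_1, \dots, \pi_n
\]
```

Because every factor of the likelihood ratio is 1 for equivalent worlds, growing n changes nothing about their relative support. Only enlarging Π or adding an assumption outside the data can.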

Key contributions

- Treats an embedded learner as the family of probability laws its accessible channel induces on evidence (one law per experimental policy), and shows that a target property is empirically identifiable only if it factors through that family.

- Defines an epistemic horizon as the set of worlds that agree on every accessible policy-indexed law, and proves that scale acts within a horizon rather than across it: model size, data, and compute cannot decide between observationally equivalent worlds without richer access or substantive extra assumptions.

- Applies the framework to hidden geometry, text-only language models, and the limiting case of whether the world we inhabit was created by an intelligence greater than ours; argues that this last question is well posed but cannot be settled empirically from inside a fixed interface.

- Companion to "Identifiable Abstractions from Observation and Intervention" (the source of the formal identifiability results) and dual to "Every Mirror Has a Blind Spot" (the internal, self-directed version of the same horizon argument).
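The factorization test and the horizon definition in the contributions above can be sketched on finite toy data. Every element here is a hypothetical assumption, not material from the paper: the worlds, the policy names `observe`, `probe`, and `intervene`, the laws, and the `created` property. Laws are compared by exact equality of probability tuples, which only makes sense in a finite toy. The sketch partitions worlds into horizons and checks whether a property is constant on each class.

```python
from collections import defaultdict

# Hypothetical toy worlds. Each maps a policy name to the law it induces on
# evidence, written as a tuple of outcome probabilities. "intervene" stands in
# for a policy the embedded learner cannot currently run.
TOY_WORLDS = {
    "w1": {"observe": (0.5, 0.5), "probe": (0.9, 0.1), "intervene": (1.0, 0.0)},
    "w2": {"observe": (0.5, 0.5), "probe": (0.9, 0.1), "intervene": (0.0, 1.0)},
    "w3": {"observe": (0.2, 0.8), "probe": (0.9, 0.1), "intervene": (0.0, 1.0)},
}


def signature(world, policies):
    """The family of laws a world induces, one per accessible policy."""
    return tuple(world[pi] for pi in policies)


def horizons(worlds, policies):
    """Group worlds that agree on every accessible policy-indexed law."""
    classes = defaultdict(list)
    for name, world in worlds.items():
        classes[signature(world, policies)].append(name)
    return sorted(sorted(names) for names in classes.values())


def identifiable(prop, worlds, policies):
    """True iff prop is constant on each horizon, i.e. factors through the laws."""
    return all(
        len({prop[name] for name in cls}) == 1
        for cls in horizons(worlds, policies)
    )


NARROW = ("observe", "probe")
RICH = ("observe", "probe", "intervene")
# Hypothetical target property: was this world created by a greater intelligence?
created = {"w1": True, "w2": False, "w3": False}

print(horizons(TOY_WORLDS, NARROW))                # [['w1', 'w2'], ['w3']]
print(identifiable(created, TOY_WORLDS, NARROW))   # False
print(identifiable(created, TOY_WORLDS, RICH))     # True
```

Sample size never appears in the sketch. The partition depends only on which laws are accessible, so more data leaves it unchanged. Adding the `intervene` policy (richer access) splits `w1` from `w2` and, in this toy, makes `created` identifiable.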

Abstract

Empirical scaling laws show that predictive loss declines smoothly with model size, data, and compute, inviting the stronger claim that enough scale can recover progressively deeper latent structure. We isolate a limit on that extrapolation. An embedded learner is the family of probability laws its accessible channel induces on evidence, one law per experimental policy it can run, and a target property is empirically identifiable only if it factors through that family. Worlds that agree on every such law form an epistemic horizon. Scale acts within a horizon rather than across it: absent richer access or substantive extra assumptions, no amount of model size, data, or compute can decide between observationally equivalent worlds. We apply the framework to hidden geometry, text-only language models, and the limiting question of whether our world was created by an intelligence greater than ours. The result neither rules out creator hypotheses nor settles questions of consciousness; it shows that scaling by itself cannot turn observational equivalence into scientific knowledge.