Machine Learning in Gastrointestinal Tract Imaging: A Comprehensive Review of Techniques and Applications

Headline result

Maps algorithmic trends across the endoscopy, colonoscopy, and wireless capsule endoscopy literature, and quantifies the relationship between dataset size and model performance to identify the data-sufficiency thresholds that bound clinically credible deployment of deep-learning models in GI imaging.

Method in brief

Systematic review of deep-learning approaches across three GI imaging modalities (endoscopy, colonoscopy, and wireless capsule endoscopy). The relationship between dataset size and reported model performance is quantified to identify data-sufficiency thresholds, and translational enablers (federated learning for data privacy, explainable AI for clinician trust) are evaluated within established ethical frameworks.
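The summary does not say how the dataset-size/performance relationship is modeled. As a minimal sketch, assuming error (for example 1 - accuracy) follows a power-law learning curve error(n) = b * n^(-c), a least-squares fit on log-log axes yields b and c, and a data-sufficiency threshold is the smallest n whose predicted error meets a chosen target. The function names and the synthetic data below are hypothetical illustrations, not the review's procedure or results.

```python
import math
from typing import Sequence, Tuple


def fit_power_law(sizes: Sequence[float], errors: Sequence[float]) -> Tuple[float, float]:
    """Fit error(n) = b * n**(-c) by ordinary least squares on log-log axes.

    Assumption (hypothetical model): error decays as a pure power law with
    no irreducible floor. Returns (b, c).
    """
    if len(sizes) != len(errors) or len(sizes) < 2:
        raise ValueError("need at least two (size, error) pairs")
    if any(n <= 0 for n in sizes) or any(e <= 0 for e in errors):
        raise ValueError("sizes and errors must be positive to take logs")
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct dataset sizes")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx                    # slope of log(error) vs log(n), i.e. -c
    intercept = y_mean - slope * x_mean  # intercept, i.e. log(b)
    return math.exp(intercept), -slope


def sufficiency_threshold(b: float, c: float, target_error: float) -> float:
    """Smallest dataset size n with b * n**(-c) <= target_error (hypothetical helper)."""
    return (b / target_error) ** (1.0 / c)


if __name__ == "__main__":
    # Synthetic, illustrative data generated from b = 2.0, c = 0.5 (not real results).
    sizes = [100, 400, 1600, 6400]
    errors = [2.0 / math.sqrt(n) for n in sizes]
    b, c = fit_power_law(sizes, errors)
    n_star = sufficiency_threshold(b, c, target_error=0.04)
    print(round(n_star))  # 2500
```

Real reported metrics are noisier and often plateau at a nonzero error floor; in that case a three-parameter form a + b * n^(-c), fitted with a nonlinear solver, is a common refinement.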

Key Contributions

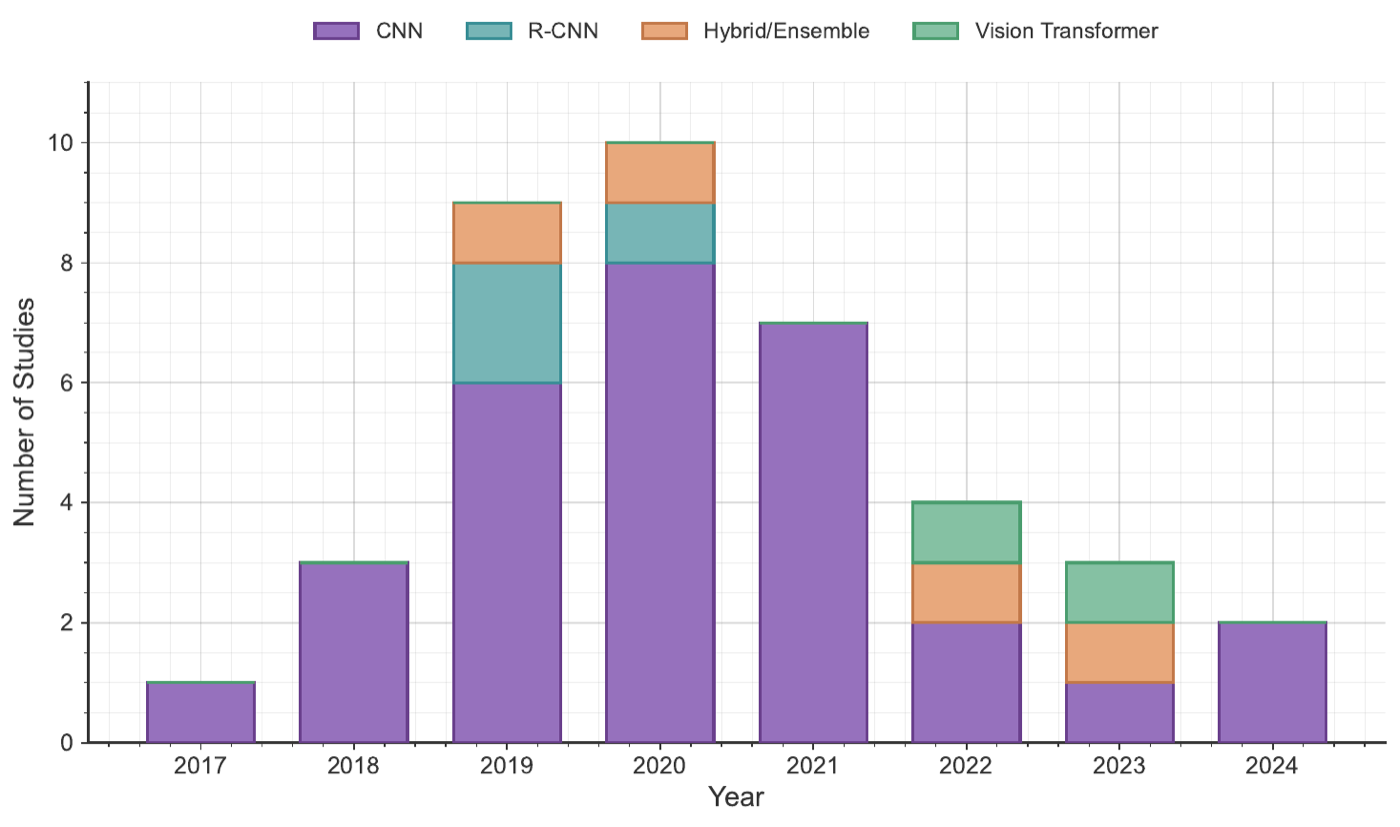

- Systematically maps machine-learning algorithmic trends to specific GI imaging techniques (endoscopy, colonoscopy, and wireless capsule endoscopy).

- Quantifies the relationship between dataset size and model performance to identify data-sufficiency thresholds for clinical deployment.

- Evaluates translational enablers, such as federated learning for data privacy and explainable AI for clinician trust, within established ethical frameworks.

- Establishes a structured, data-informed baseline that goes beyond descriptive surveys and offers explicit guidance for future clinical AI innovation.
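The summary names federated learning as a privacy enabler but not a specific algorithm. As a minimal sketch, assuming the widely used Federated Averaging (FedAvg) aggregation rule, each participating site trains locally and shares only its model parameters, which a server combines as an average weighted by local sample counts, so raw images never leave the site. The `fedavg` function, the flattened parameter vectors, and the three sites are hypothetical; local training is omitted.

```python
from typing import List, Sequence


def fedavg(client_weights: Sequence[Sequence[float]],
           client_sizes: Sequence[int]) -> List[float]:
    """Aggregate flattened client parameter vectors (hypothetical FedAvg sketch).

    Each client's vector is weighted by its share of the total sample count.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same parameter shape")
    global_weights = [0.0] * dim
    for weights, n_k in zip(client_weights, client_sizes):
        share = n_k / total  # this client's fraction of all training samples
        for i, w in enumerate(weights):
            global_weights[i] += share * w
    return global_weights


if __name__ == "__main__":
    # Three hypothetical endoscopy sites with 100, 300, and 600 local images.
    sites = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    counts = [100, 300, 600]
    print([round(w, 6) for w in fedavg(sites, counts)])  # [0.7, 0.9]
```

Weighting by sample count keeps the global model closest to the largest site's update; in a full round, the aggregated vector would be sent back to every site as the starting point for the next local training step.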

Abstract

Gastrointestinal (GI) imaging modalities, including endoscopy, colonoscopy, and wireless capsule endoscopy, provide vital diagnostic information, yet their manual interpretation remains laborious. Although deep-learning approaches, particularly convolutional neural networks (CNNs), hybrid architectures, and transformer-based models, have achieved high accuracy in this domain, their clinical adoption is impeded by data limitations and trust concerns. This study (1) systematically maps algorithmic trends to specific GI imaging techniques, (2) quantifies the relationship between dataset size and model performance to identify data-sufficiency thresholds, and (3) evaluates translational enablers, such as federated learning for data privacy and explainable AI for clinician trust, within established ethical frameworks. By situating our analysis at the intersection of methodological rigor, quantitative assessment, and clinical applicability, this work aims to establish a structured, data-informed baseline that advances beyond purely descriptive surveys and guides future innovation toward impactful clinical AI deployment.